AI: Industry Guide

What is AI and who are the three major players?

AI Hype Explained

AI has been the world’s buzzword since the back end of 2023, but what is the hype all about? What does it actually do? What are its use cases? These are questions asked by many. Most importantly, why are companies pouring billions in CapEx (capital expenditure) into it?

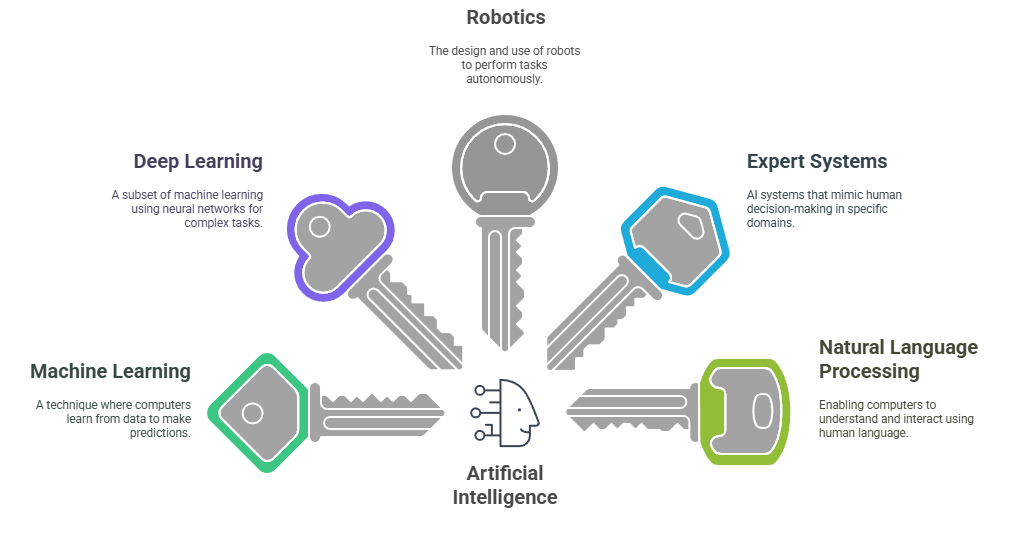

Well, first let’s dive into what AI (Artificial Intelligence) is. AI encompasses a broad spectrum of techniques and methodologies aimed at creating systems capable of performing tasks that typically require human intelligence.

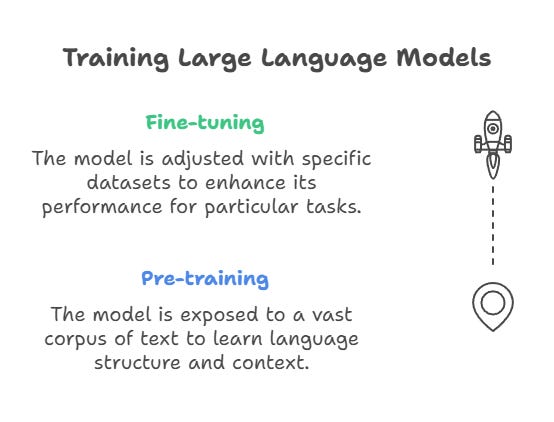

Within this broad set of technologies, deep learning gave birth to LLMs (Large Language Models), which are the root of the hype in AI today. LLMs are built using neural networks: computational systems inspired by the way neurons in the human brain function. These networks consist of layers of connected units, or "artificial neurons", that process data. During training, the model learns patterns and relationships in the training data by adjusting its internal parameters using techniques like backpropagation. Once trained, the model is evaluated on separate testing data to measure its performance and check that it can generalize to new, unseen inputs.
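To make the idea of "adjusting internal parameters" a bit more concrete, here is a minimal sketch of training in Python. It assumes a single artificial neuron with just two parameters and made-up data following y = 2x + 1, so backpropagation reduces to computing one gradient directly. Real LLMs repeat the same idea across many layers and billions of parameters.

```python
# Toy data following y = 2x + 1 (made up for this sketch).
train_data = [(x, 2 * x + 1) for x in [0.0, 1.0, 2.0, 3.0]]
test_data = [(x, 2 * x + 1) for x in [4.0, 5.0]]  # never seen during training


def train(data, epochs=2000, learning_rate=0.05):
    """Fit prediction = weight * x + bias by gradient descent."""
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        grad_w, grad_b = 0.0, 0.0
        for x, target in data:
            error = (weight * x + bias) - target
            # Gradients of the squared error with respect to each parameter.
            grad_w += 2 * error * x
            grad_b += 2 * error
        # Nudge each parameter against its average gradient.
        weight -= learning_rate * grad_w / len(data)
        bias -= learning_rate * grad_b / len(data)
    return weight, bias


def mean_squared_error(data, weight, bias):
    """Average squared gap between predictions and targets."""
    return sum((weight * x + bias - y) ** 2 for x, y in data) / len(data)


weight, bias = train(train_data)
test_error = mean_squared_error(test_data, weight, bias)
print(f"weight={weight:.3f} bias={bias:.3f} test error={test_error:.6f}")
```

The learned weight and bias should land very close to 2 and 1, and the low error on the unseen test points is what "generalizing" means in practice.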

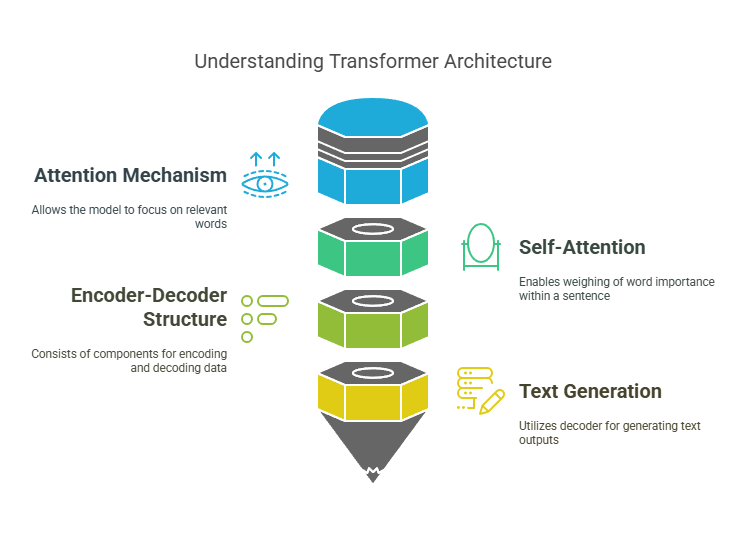

At the core of large language models is the transformer architecture, introduced in the groundbreaking 2017 paper "Attention Is All You Need" by eight researchers, most of them at Google at the time. This architecture relies on a mechanism called self-attention, which enables the model to dynamically focus on the most relevant words in a sentence or context. By doing so, it captures relationships between words, even if they are far apart, making it particularly effective for language tasks.
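For the curious, self-attention can be sketched in a few lines of Python. This is a simplified illustration rather than a real transformer layer: the word vectors are made-up two-dimensional numbers, and each vector acts as its own query, key, and value, whereas real models first pass them through learned matrices.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections."""
    scale = math.sqrt(len(vectors[0]))
    outputs, all_weights = [], []
    for query in vectors:
        # Score how relevant every word is to the current word.
        scores = [dot(query, key) / scale for key in vectors]
        weights = softmax(scores)
        # The output is a weighted average of every word's vector.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors))
                 for i in range(len(query))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-dimensional "embeddings" for three words.
words = ["cat", "sat", "kitten"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
outputs, weights = self_attention(vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

In this toy setup "cat" places more weight on "kitten" than on "sat" because their vectors point in similar directions, which is the sense in which attention "focuses" on relevant words.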

The original transformer consists of an encoder-decoder structure: the encoder processes input data into representations, and the decoder generates output based on those representations. However, many LLMs, such as GPT (Generative Pre-trained Transformer), use only the decoder portion to focus exclusively on generating coherent and contextually relevant text.
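Decoder-only models generate text one word (more precisely, one token) at a time, feeding each new word back in as context for the next. The loop below is a rough sketch of that process. The `NEXT_WORD` table is a hypothetical stand-in for the trained neural network, and unlike a real decoder it only looks at the previous word rather than the whole context.

```python
# Hypothetical stand-in for a trained model: maps the previous word to the
# most likely next word. A real decoder conditions on the whole context.
NEXT_WORD = {
    "<start>": "chips",
    "chips": "power",
    "power": "modern",
    "modern": "AI",
    "AI": "<end>",
}


def generate(max_words=10):
    """Produce text word by word until the model signals the end."""
    words = ["<start>"]
    for _ in range(max_words):
        next_word = NEXT_WORD.get(words[-1], "<end>")
        if next_word == "<end>":
            break
        words.append(next_word)  # the new word becomes part of the context
    return " ".join(words[1:])


print(generate())  # chips power modern AI
```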

This was groundbreaking because transformers represented a significant advancement in natural language processing, enabling LLMs to understand and generate human language with unprecedented accuracy and efficiency. These models have even progressed to generating images and videos. The hope is that they will improve productivity and thereby contribute to GDP growth.

There are many use cases of AI across different industries. Below are a few examples:

Healthcare

Medical Imaging: Analysis of medical images (like X-rays and MRIs) to detect anomalies such as tumors or fractures with high accuracy.

Predictive Analytics: Predicting patient outcomes and disease outbreaks by analyzing historical data, enabling proactive healthcare measures.

Virtual Health Assistants: Chatbots and virtual assistants can provide patients with information, schedule appointments, and even offer preliminary diagnoses.

Finance

Fraud Detection: Analyzing transaction patterns to identify and flag potentially fraudulent activities in real time.

Algorithmic Trading: AI algorithms can process vast amounts of market data to make trading decisions faster than human traders.

Personalized Banking: AI-driven chatbots can assist customers with their banking needs, providing tailored financial advice based on individual spending habits.

Retail

Recommendation Systems: Analyzing customer behaviour to provide personalized product recommendations, enhancing the shopping experience.

Inventory Management: AI can predict demand trends, helping retailers manage stock levels efficiently and reduce waste.

Customer Service: AI-powered chatbots can handle customer inquiries, providing instant support and freeing up the human workforce for more complex issues.

The Three Major Players

Building, maintaining, and using LLMs requires massive computational power due to the vast amounts of data they are trained on, the iterative nature of the training process, and the demands of their deployment. For instance, GPT-4 is widely reported, though not officially confirmed, to have more than a trillion parameters. Several major players at each step of the supply chain make these advancements possible. Let’s start at the beginning: semiconductors.
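Some back-of-the-envelope arithmetic shows why model size translates into hardware demand. The sketch below assumes each parameter is stored as a 16-bit number (2 bytes) and uses a hypothetical accelerator with 80 GB of memory. Both figures are illustrative assumptions, and the parameter counts are round numbers rather than figures for any specific model.

```python
import math

BYTES_PER_PARAMETER = 2   # assumes 16-bit weights
GPU_MEMORY_GB = 80        # hypothetical accelerator memory, for illustration


def weights_size_gb(parameter_count):
    """Memory needed just to store the model's parameters, in gigabytes."""
    return parameter_count * BYTES_PER_PARAMETER / 1e9


def min_gpus_to_hold(parameter_count):
    """Smallest number of such GPUs whose combined memory fits the weights."""
    return math.ceil(weights_size_gb(parameter_count) / GPU_MEMORY_GB)


for count in (7 * 10**9, 10**12):
    print(f"{count:,} parameters: {weights_size_gb(count):,.0f} GB, "
          f"at least {min_gpus_to_hold(count)} GPU(s)")
```

This only counts storing the weights. Training needs considerably more memory and compute on top, which is part of why demand for chips has grown so quickly.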

NVIDIA

First, I’ll explain the key component: the semiconductor.

Semiconductors are essential for building chips because they can be made into transistors, tiny switches that control the flow of electricity. Computers operate in binary (0s and 1s), where '0' represents no current and '1' represents current. Transistors enable this by switching on and off, allowing them to express binary states and power computational processes. Without these chips, we would still be stuck in the 20th century. Semiconductors power virtually everything, from radios and refrigerators to phones and computers, making them central to modern life.
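To see how simple on/off switches turn into computation, here is a small Python sketch. It models a NAND gate, a basic building block that real chips make from a handful of transistors, and combines copies of it into a circuit that adds two binary numbers. The function names are my own and the model ignores all the physics; it only shows the logic.

```python
def nand(a, b):
    """Output is 0 only when both inputs are on."""
    return 0 if (a and b) else 1


# Every other gate below is built purely from NAND gates.
def not_(a):
    return nand(a, a)


def and_(a, b):
    return not_(nand(a, b))


def or_(a, b):
    return nand(not_(a), not_(b))


def xor(a, b):
    return and_(or_(a, b), nand(a, b))


def full_adder(a, b, carry_in):
    """Add three bits, returning (sum bit, carry bit)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out


def add_bits(x_bits, y_bits):
    """Add two equal-length bit lists, least significant bit first."""
    result, carry = [], 0
    for a, b in zip(x_bits, y_bits):
        bit, carry = full_adder(a, b, carry)
        result.append(bit)
    return result + [carry]


# 3 is 011 and 5 is 101 in binary; written here least significant bit first.
print(add_bits([1, 1, 0], [1, 0, 1]))  # [0, 0, 0, 1], which is 8
```

A modern chip does essentially this, just with billions of switches working together.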

The type of chip that has enabled this momentous growth in AI is the GPU (Graphics Processing Unit). And this is where NVIDIA comes in. Although they didn’t invent GPUs, they certainly made them what they are today. GPUs are specialized processors designed to handle parallel computations efficiently. Parallel processing is the simultaneous execution of multiple computations, enabling faster processing by dividing tasks across multiple cores or processors.
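Matrix multiplication, an operation at the heart of neural networks, is a good example of work that splits up naturally: each row of the result can be computed without knowing any of the others. The sketch below hands each row to a separate worker using Python threads. It is meant to show how the task is divided rather than to be fast (Python threads will not give a GPU-like speed-up), and the helper names are my own.

```python
from concurrent.futures import ThreadPoolExecutor


def multiply_row(row, matrix_b):
    """Compute one row of the result; it depends on no other row."""
    columns = list(zip(*matrix_b))
    return [sum(a * b for a, b in zip(row, col)) for col in columns]


def parallel_matmul(matrix_a, matrix_b, workers=4):
    """Multiply two matrices, giving each output row to a worker."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns the rows in their original order.
        return list(pool.map(lambda row: multiply_row(row, matrix_b),
                             matrix_a))


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(parallel_matmul(a, b))  # [[19, 22], [43, 50]]
```

A GPU applies the same idea at a much larger scale, with thousands of cores each handling a small piece of the calculation at once.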

Before the AI craze, NVIDIA was predominantly known amongst gamers for GPUs that delivered high-quality 3D rendering. Then, in the crypto boom, NVIDIA’s GPUs gained further prominence as they became a popular choice for crypto mining due to their processing power and efficiency. NVIDIA’s importance in AI lies in the same strength: their GPUs’ ability to handle massive parallel computations, which is essential for training and running machine learning models. The introduction of CUDA in 2006 expanded GPUs beyond graphics, allowing general-purpose workloads such as matrix multiplication and neural network training to run efficiently on them. Today, NVIDIA’s AI-focused hardware forms the backbone of AI infrastructure, powering data centres and driving large-scale model advancements. While there’s much more to cover about NVIDIA, I’ll save that for another post to keep things focused for now.

Of course, none of NVIDIA’s groundbreaking GPUs would exist without the advanced semiconductor manufacturing that makes them possible. This is where the next big player comes in.

TSMC

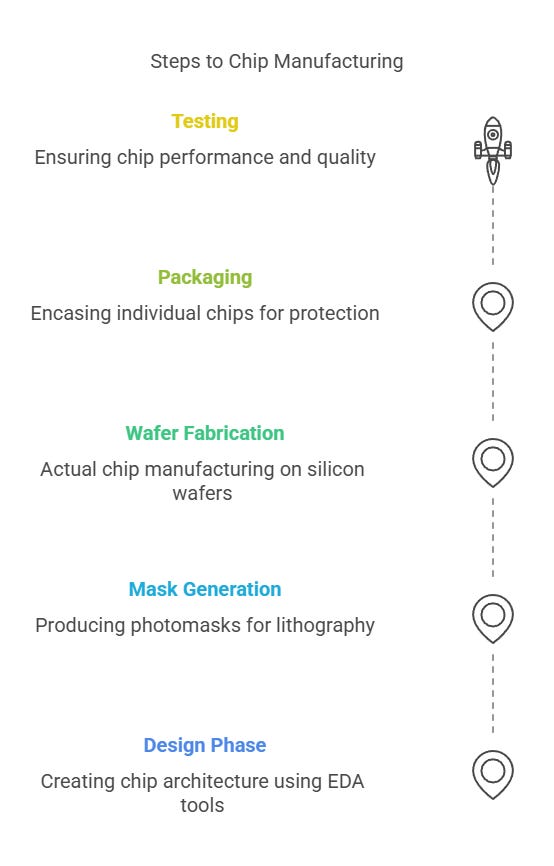

TSMC (Taiwan Semiconductor Manufacturing Company) is responsible for manufacturing the highly advanced chips that NVIDIA and many other companies design. Founded in 1987 in Taiwan, TSMC pioneered the pure-play foundry model, focusing exclusively on manufacturing chips for other companies. Building these chips is extremely complex and involves several stages.

The goal of chip design and manufacturing is to make transistors as small as possible, allowing more of them to fit onto a single chip and maximize performance. TSMC now manufactures chips on a 3nm process. The name is a label for the technology generation rather than a literal measurement of any one feature, but the scale involved really is tiny. To put 3 nanometers into perspective, a DNA double helix is about 2 nanometers wide, so if Ant-Man shrank down to 3 nanometers, he’d be only slightly taller than a strand of DNA is wide. Chips built on this process house billions of transistors, enabling unparalleled processing power and energy efficiency for cutting-edge applications like AI and advanced computing. TSMC plans to begin 2nm chip production this year, which should push efficiency and performance even further by fitting even more transistors on a chip, reducing energy consumption, and unlocking new possibilities for AI and high-performance computing.

The next major player is behind TSMC’s rapid manufacturing advances and plays a crucial role at the wafer fabrication stage.

ASML

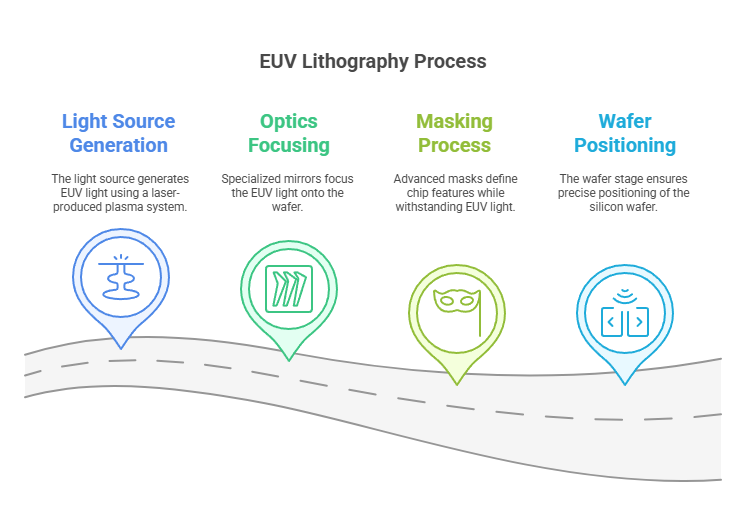

ASML is a Dutch company arguably responsible for the high quality of chips being designed and manufactured today. Their groundbreaking EUV (Extreme Ultraviolet) lithography technology has enabled the production of the smallest and most powerful chips. The wafer fabrication stage of chip manufacturing is where the intricate circuits that make up a chip are created on a silicon wafer. This process involves multiple steps, including photolithography, etching, deposition, and doping, to build layers of transistors and connections, forming the functional components of the chip.

The EUV process is complex and involves using light with an extremely short wavelength (13.5 nanometers) to print incredibly precise patterns onto silicon wafers. Those patterns are then etched into the wafer in later steps.

The latest ASML machine would set you back €350 million. Expensive, right? It also weighs about 165 tonnes (165,000 kg). Although the exact number of orders for the latest machine hasn’t been disclosed, it’s estimated that ASML has received between 10 and 20. Without ASML's powerful machines, the chips we have today wouldn’t be possible.

Conclusion

I hope that I’ve been successful in simplifying the complex technology behind much of the strong bull run that we’ve been seeing in equities. Of course, there are many more major players than the three I have covered. However, without NVIDIA, TSMC and ASML, the rapid advancements in AI (which stem from this powerful infrastructure) wouldn’t be possible.

Understanding the driving forces behind market trends is crucial, particularly in a cyclical industry like semiconductors. By grasping the fundamentals of the technology and the pivotal roles these companies play, investors can better appreciate their significance in driving growth in equities.

Thanks for reading! Please feel free to reach out if you have any questions.